Fragmented rules and AI push banks to overhaul controls

Governance gaps and rising cyber risks force tighter oversight as AI scales.

Banks are entering 2026 under mounting pressure to balance rapid artificial intelligence adoption against tightening regulation and rising systemic risks, with governance gaps emerging as a central fault line in the industry.

Matthew Driver, Executive Vice President, Head of Services Asia Pacific at Mastercard, said banks are operating in an increasingly difficult environment shaped by profitability pressure and external shocks. “We've got margin compression, increased competition for banks, evolving credit and funding risks,” he said, adding that rapid technological and regulatory change is unfolding alongside “rising geoeconomic and cyber threats.”

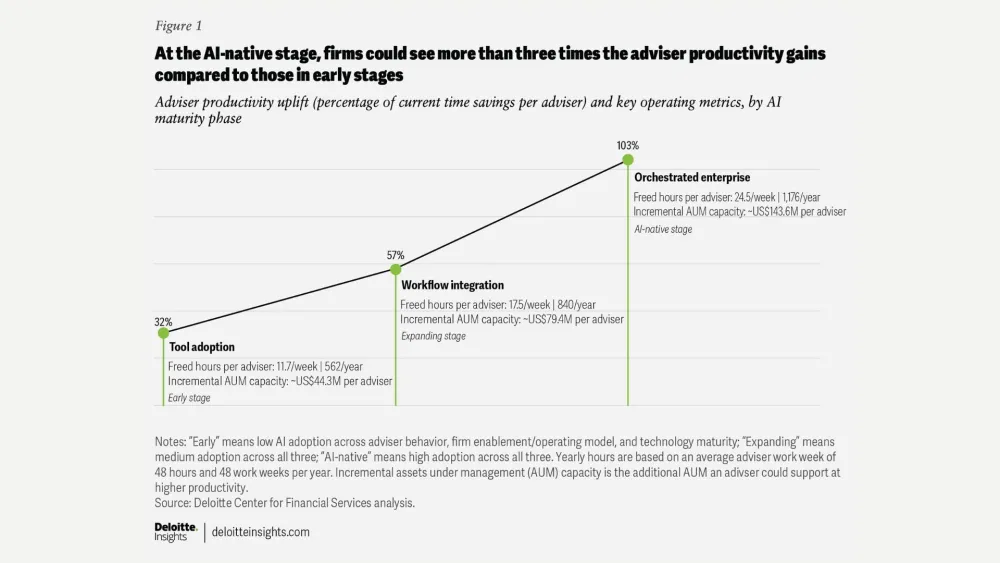

At the same time, Basil Hwang, Managing Partner at Hauzen LLP, said AI adoption is accelerating across the financial sector. “Banks should experience cost savings from adopting AI in backroom and back office functions, but also in customer facing functions,” he said. Those gains could also reshape employment: Hwang warned that adoption “could also mean a reduction in headcount,” forcing workers to retool and become proficient in AI tools.

The deeper concern is that governance is not keeping pace with deployment. Driver said, “AI has been moving faster than governance in many institutions,” and boards are increasingly asking whether that gap itself has become a business risk.

He said regulators are now focused on governance frameworks, citing Singapore’s proposed AI Risk Management Guidelines and Hong Kong’s expanding GenAI sandbox. “Governance is a key component,” he said, stressing that it must be “intentional and foundational, embedded across the AI life cycle.”

Cybersecurity risks compound the problem. Hwang said the exposure is already substantial, pointing to “a significant hack of a popular AI tool” that enabled a virus to drain API keys and crypto wallet information. “The cybersecurity risk with AI, especially when you're using generative AI is very real,” he said, warning that agentic AI also increases the risk of hacking, data leakage and privacy breaches.

Driver said digital activity is expanding faster than many banks can safely manage. “Digital transactions are going to double, but not all banks are ready for AI and machine learning,” he said. To stay ahead, he argued, banks must “modernize their risk, compliance and control environments” while placing governance at the centre of innovation.

Hwang said banks must also be more disciplined about implementation. Because financial institutions remain prime targets for bad actors, he said they need to “carefully audit any AI tools that they put in place” and consider retraining staff rather than replacing them entirely.

Together, Driver and Hwang point to the same conclusion: for banks in 2026, the real challenge is no longer whether to adopt AI, but whether they can do so without exposing themselves to governance, cyber and regulatory failures.
